This article is authored by Rachel Storer with the mentorship of Sarah M. Tashjian and is a part of the 2018 pre-graduate spotlight week.

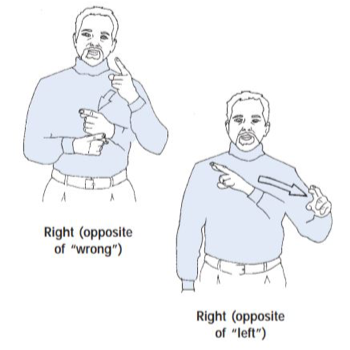

It wasn’t until 1960 that linguists began to consider sign language a language separate from spoken language (Stokoe, 1960). Before then, many linguists believed that sign language was a signed version of the spoken language of whichever country a deaf person lived in; for example, linguists thought that American Sign Language (ASL) was a signed version of English (Perlmutter, 1989). However, in the 1960s linguists began to realize that sign language has the same properties that make spoken language a language. For instance, sign language has its own structure and grammar, and each sign has its own meaning that is independent of spoken language. Although it has been almost 60 years since linguists began to consider sign language its own language, many people still hold the incorrect perception that a sign is just a gesture that represents a spoken word. An easy way to illustrate why this is incorrect is to think of English words that have more than one meaning. For instance, the English word “right” has two different meanings. If ASL were a gestural form of English, there would be one sign for “right” used to convey both meanings of the spoken word. However, ASL has a separate sign for each meaning of “right”, just as other languages express the two meanings with two different words.

Two distinct signs in American Sign Language that illustrate the two meanings of the word “right”.

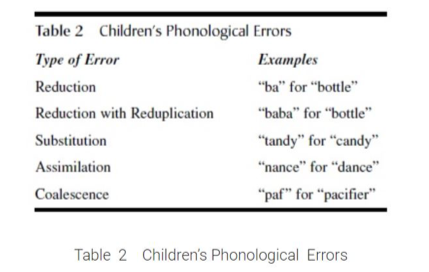

With the acceptance of sign language as an independent language, linguists began studying how language develops in deaf children. Two prominent theorists, the psychologist B.F. Skinner and the linguist Noam Chomsky, had proposed competing theories of language development. Skinner argued that language develops through environmental input: children are positively reinforced when they make a correct word-meaning association (Lemetyinen, 2012). The following is an example of Skinner’s theory: a child says the word “milk” while pointing at a glass of milk; the child’s mother affirms that the child is pointing at milk and gives the milk to the child, positively reinforcing the child’s language and associations. Chomsky, on the other hand, argued that there is a Universal Grammar whereby children have an innate, biological understanding of grammatical categories (e.g., nouns and verbs), and that language acquisition consists of learning the words of the language that fill these categories (Lemetyinen, 2012). Moreover, Chomsky’s theory suggests that children instinctively know how to combine a noun and a verb to create a meaningful and correct phrase (Ambridge & Lieven, 2011). Although theories of language development are still debated, researchers have come to a consensus that most hearing children acquire language in stages (see Table 1) and that during each stage children produce certain predictable errors (see Table 2).

Retrieved from: http://psychology.iresearchnet.com/developmental-psychology/language-development/

Retrieved from: http://psychology.iresearchnet.com/developmental-psychology/language-development/

Even though deaf children learn language in a manner similar to that of hearing children (i.e., they make errors similar to those of hearing children) (Bellugi & Klima, 1991), deaf children often also face language impoverishment, something that most hearing children do not experience except in extreme cases (Meadow, 1968). Deaf children who are born to hearing parents often suffer from language impoverishment due to a lack of language input during critical periods of development (see Schick & Moeller, 1992; Mayberry et al., 2001; Geers & Schick, 1988; Mayberry, 2002). Deaf children who have impoverished language input early in life often show delays in cognitive and achievement domains such as reading skills (Chamberlain, 2001). Importantly, cognitive and reading skill delays can be overcome. These findings suggest that factors that improve language comprehension, whether the child is deaf or hearing, also promote reading skills (Chamberlain, 2001).

Studies have found that deaf children who learn sign language from a young age go through the same stages of language acquisition as hearing children (Bellugi & Klima, 1991). Deaf children even make the same errors that hearing children do, at or around the same age (Bellugi & Klima, 1991). This discovery led researchers to look at outcomes that are correlated with language development in deaf children and to compare them with the same correlates in hearing children. These correlates include cognitive and achievement outcomes such as academic performance, reading competence, speech acquisition, breadth of vocabulary, and theory of mind (the ability to attribute mental states such as beliefs, intents, desires, emotions, and knowledge to oneself and to others, and to understand that others have beliefs, desires, intentions, and perspectives that are different from one’s own) (Mayberry, 2002). Because deaf children do not acquire language skills in the traditional way (through hearing), researchers previously thought that deaf children would show deficits in these skills as they developed. However, research on language skills in both hearing and deaf children illustrates that deafness itself does not cause disparities in the above aspects of language development and understanding (Allen & Schoem, 1997). As with hearing children, factors such as economic status, language enrichment in the home, and early language exposure account for disparities in language development in deaf children (Allen & Schoem, 1997). Studies have found that one of the main reasons for these deficits in deaf children is a lack of early language exposure, not the fact that the children are deaf (Chamberlain & Mayberry, 2000; Mayberry et al., 2001).

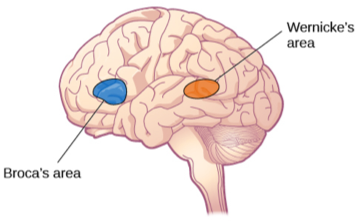

Beyond understanding the links between language acquisition and performance outcomes, researchers are also interested in whether sign language is processed differently from spoken language in the brain. The conventional understanding is that language and language processing rely primarily on the left hemisphere of the brain. However, language processing in the deaf population is less clear, and findings vary with the type of test used. For example, Neville and colleagues found that native ASL signers, both deaf and hearing, showed activation in the expected left hemisphere brain regions, such as Broca’s area and Wernicke’s area, while processing ASL sentences (Neville, Bavelier, Corina et al., 1998). However, a different study using a semantic memory task (i.e., classification of items into categories) and an episodic memory task (i.e., word recognition) found that deaf signers showed activation in the right hemisphere, more specifically the right visual association areas, whereas hearing speakers showed activation in the left hemisphere when performing the task in speech (Rönneberg, Söderfeldt & Risberg, 1998). These conflicting results illustrate the need for further research, as well as for attention to the types of tests used to probe language in these studies.

Retrieved from: https://brocasaphasia.wordpress.com/

It is currently unknown how the language impoverishment that often occurs in children who are born deaf or become deaf at a very young age affects brain development and organization. Although some studies have suggested that children who use sign language still show a left hemispheric preference while processing language, other studies suggest that the right hemisphere is equally or even more active during language processing (Mayberry, 2002). This disparity illustrates the importance of standardizing research methods and tests for studying how sign language relates to brain development. Understanding this relationship, and comparing it with what we know about the relationship between language development and brain development in hearing children, could give us a better understanding of how language develops in deaf children.

Despite the continued need for a better understanding of language acquisition in deaf children, there have been considerable developments in the study of deafness in the last few decades. Oral methods are no longer the primary way of teaching deaf children in school (Mayberry, 2002), and sign language is no longer thought to inhibit language development and academic performance in children who are deaf or hard of hearing. Slowly, old ideas about deafness and sign language are becoming obsolete, and we can thank the growing body of research that allows us to understand that although deafness and sign language are considered atypical relative to the general population, the development of language in a deaf child is not.

Thank you

———-

Rachel Storer is currently a lab manager in Dr. Adriana’s Developmental Neuroscience Lab at UCLA and helps with aspects of studies that are led by her collaborators. She graduated from UCSD in June 2017 with a B.S. in Clinical Psychology and a minor in Cognitive Science. She wishes to pursue a PhD in Clinical Psychology, where she hopes to study individual differences in behavior in individuals with anxiety and depression using neuroimaging techniques and computer tasks, ultimately applying these findings to more precise treatments for individuals with these mental illnesses. The inspiration for her article came from learning American Sign Language in high school and college, and from a conversation she had with her current mentor, Dr. Galvan, about language development in children who are deaf and its relationship to brain development in these children.

———-

References

Allen TE, Schoem SR: Educating deaf and hard-of-hearing youth: What works best? Paper presented at the Combined Otolaryngological Spring Meetings of the American Academy of Otolaryngology, Scottsdale, AZ, May 1997.

Ambridge, B., & Lieven, E.V.M. (2011). Language Acquisition: Contrasting theoretical approaches. Cambridge: Cambridge University Press.

Bellugi, U. and Klima, E. (1991). What the Hands Reveal About the Brain. In Martin, D.S. (Ed.), Advances in Cognition, Education and Deafness.

Chamberlain C, Mayberry RI: Do the deaf ‘see’ better? Effects of deafness on visuospatial skills. Poster presented at TENNET V, Montreal, May 1994.

Chamberlain C: Reading skills of deaf adults who use American Sign Language: Insights from word recognition studies. Unpublished doctoral dissertation. McGill University, Montreal, 2001.

Geers A, Schick B (1988). Acquisition of spoken and signed English by hearing-impaired children of hearing-impaired or hearing parents. Journal of Speech and Hearing Disorders, 53, 136–143.

Lemetyinen, H. (2012). Language acquisition. Retrieved from www.simplypsychology.org/language.html

Mayberry, RI. (2002). Cognitive development in deaf children: the interface of language and perception in neuropsychology. In S.J. Segalowitz and I. Rapin (Eds), Handbook of Neuropsychology, 8 (2), 71-107.

Mayberry R.I., Chamberlain C, Waters G, Doehring D. (2001). Reading development in relation to sign language structure and input. Manuscript in preparation.

Meadow, K. P. (1968). Early manual communication in relation to the deaf child’s intellectual, social, and communicative functioning. American Annals of the Deaf, 113, 29–41.

Neville H.J., Bavelier D, Corina D, Rauschecker J, Karni A, Lalwani A, Braun A, Clark V, Jezzard P, Turner R (1998). Cerebral organization for language in deaf and hearing subjects: Biological constraints and effects of experience. Proceedings of the National Academy of Sciences, 95, 922–929.

Perlmutter, David M. “The Language of the Deaf.” New York Review of Books, March 28, 1991, pp. 65-72.

Rönneberg J, Söderfeldt B, & Risberg J (1998). Regional cerebral blood flow during signed and heard episodic and semantic memory tasks. Applied Neuropsychology, 5, 132–138.

Schick B, & Moeller M.P. (1992). What is learnable in manually coded English sign systems? Applied Psycholinguistics, 3, 313–340.

Stokoe, WC. (1960). Sign language structure: An outline of the visual communications systems of the American deaf. Journal of Deaf Studies and Deaf Education, 10, 4-37.